What Are AI-Powered Cyber Attacks?

AI-powered cyber attacks are an emerging threat in which attackers use AI to automate, adapt, and execute attacks in real time. Unlike traditional cyber threats, these attacks adapt their tactics dynamically and can target multiple systems at once. AI-powered cyber attacks in 2026 are defined by four characteristics. The first is automation, which lets attackers run mass-scale attacks without human involvement. The second is adaptation, in which attacks change strategy depending on the defences they meet. The third is real-time decision making, i.e., the system can select the optimal attack path. Lastly, global scalability lets such attacks reach multiple systems across regions. Examples include AI-generated phishing, deepfake impersonation, autonomous hacking systems (agentic AI), and adaptive malware, which are much more sophisticated and harder to detect than standard attacks.

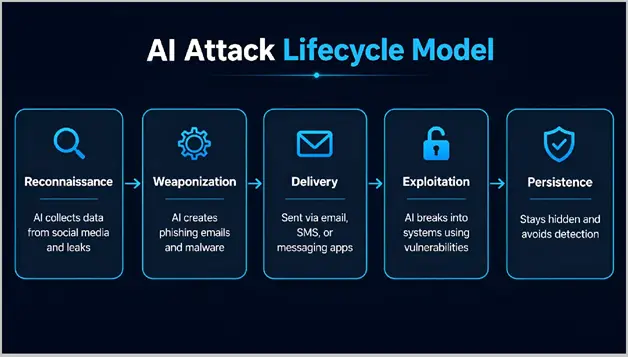

AI Attack Lifecycle Model

AI is not just used in one part of a cyber attack; it is used across the entire attack process. This makes the attack cycle faster and more effective than older methods.

Modern AI-powered cyber attacks follow a defined lifecycle:

1. Reconnaissance

AI gathers data from numerous sources, such as public records, social media, company websites, and leaked databases. It analyses this data to understand user behaviour, communication patterns, job roles, and system structures. This lets attackers pick out high-value targets, map how people in an organization are connected, and locate entry points with high accuracy.

2. Weaponization

AI generates attack tools such as malicious links and malware. These are highly personalized because they use real context like recent emails, projects, or organizational data. AI can also generate multiple variations of the same attack and optimize them over time, which increases the chance that at least one of them will work.

3. Delivery

The attack is delivered via multiple platforms, such as email, text messaging, instant messaging and phone calls. AI uses user patterns and habits to determine the most suitable time, channel and type of message. It can also orchestrate attacks across multiple channels to make the attack more believable and more likely to succeed.

4. Exploitation

Once the target engages, the AI attempts, without human intervention, to gain access by exploiting vulnerabilities, stolen credentials or malicious downloads. It can test different attack paths, switch techniques if blocked, and adapt in real time. This makes the attack more flexible and significantly increases the success rate compared to traditional methods.

5. Persistence

Once inside the system, AI works to keep its access. It can remain hidden by blending into legitimate network traffic and bypassing security measures. Over time, it can escalate its control, spy on activity, or steal information without raising the alarm.

Key Insight

AI can execute the entire attack lifecycle much faster than traditional methods, sometimes in just minutes. This makes it harder for security to detect and take action before it’s too late.

Classification of AI Cyber Attacks

Not all AI-powered cyber attacks are the same. To make these threats easier to understand, they can be categorised by the AI techniques used in the attack.

1. Generative AI Attacks

In these attacks, generative AI is used to create content at scale. This includes phishing emails, fake documents, and highly realistic synthetic media. Attackers can generate tailored messages that match the target’s role, language, and behaviour, which makes them very hard to spot.

2. Agentic AI Attacks

Agentic AI attacks involve systems that can plan and execute multi-step attacks. These systems can analyze targets, choose attack paths, and adapt their strategy automatically. This makes them more flexible and dangerous than traditional scripted attacks.

3. Swarm AI Attacks

Swarm AI attacks involve multiple AI systems working together. Each system performs a specific task, such as scanning targets, generating attacks, or monitoring responses. These systems share information, which makes the whole attack faster and more effective.

4. Deepfake Identity Attacks

Deepfake identity attacks use AI-generated voice or video to impersonate individuals. Common examples include CEO fraud, voice scams, and other identity-based attacks. Because they appear highly realistic, they can bypass traditional trust and verification processes.

| Type of AI Attack | Description | Example Use Case |
| --- | --- | --- |
| Generative AI Attacks | AI creates content like phishing emails, fake documents, and media | Personalized phishing campaigns |
| Agentic AI Attacks | Autonomous systems that plan and execute multi-step attacks | Automated network intrusion |
| Swarm AI Attacks | Multiple AI systems working together and sharing data | Large-scale coordinated attacks |
| Deepfake Identity Attacks | AI-generated voice or video used to impersonate individuals | CEO fraud, voice scams |

Agentic AI: The Rise of Autonomous Cyber Attack Systems

One of the most advanced developments in modern cyber threats is agentic AI. In contrast to traditional tools that operate according to predetermined algorithms, agentic AI systems are able to plan, execute, and adapt attacks autonomously. These systems are designed to achieve specific goals, which enables them to go beyond simple actions such as sending phishing emails or running scripts. Rather, they manage entire attack chains, from identifying targets and analysing systems to maintaining access. As an example, an agentic AI system can analyze a target organization, pinpoint weak points in systems or users, launch different attack methods, and adjust its strategy if something fails. This makes attacks more flexible and difficult to prevent.

Key Difference

Traditional cyber attacks follow pre-programmed instructions and predictable methods. In contrast, agentic AI attacks make decisions in real time based on their environment, which lets them adjust their actions and attack path and respond dynamically to each situation.

Why It Matters

Agentic AI minimises the role of humans in an attack. This enables attackers to:

- Run continuous attacks without monitoring

- Operate at large scale

- Quickly adapt to security defences

As this technology becomes more widely available, even smaller threat actors can carry out highly advanced attacks.

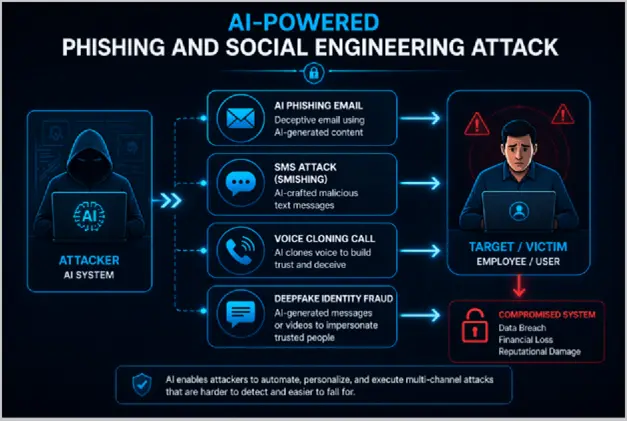

AI-Powered Phishing & Social Engineering Explosion

Social engineering and phishing attacks are getting smarter with artificial intelligence (AI). Attackers can develop complex, targeted and convincing messages. AI can draw on vast amounts of information from social media, emails and other online messages to generate messages that match the target's behaviour, language and context.

Why AI Phishing Is More Dangerous

AI-powered phishing attacks are more effective since they are:

- Highly personalized: Messages are made for particular individuals

- Context-aware: They make references to real individuals, projects, or occasions

- Language-accurate: Unlike traditional phishing, there are no grammatical errors

- Scalable: Immediate generation of thousands of personalised messages

Rise of Multi-Channel Attacks

Modern attacks are not limited to email. Using artificial intelligence, attackers can combine several channels, including:

- SMS (smishing)

- Voice calls (voice cloning)

- Messaging platforms

For example, a victim might receive:

- A realistic email from a manager

- A follow-up message on chat

- A voice call using a cloned voice

This layered approach increases trust and success rates.

Business Email Compromise (BEC) Evolution

BEC attacks are now riskier than ever because of the use of Artificial Intelligence. Attackers can now:

- Imitate executives using a deepfake voice

- Send perfectly written emails

- Create urgency to trigger quick decisions

These attacks often lead to financial loss or data breaches.
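Some of these traits can be checked with simple heuristics on the email side. The sketch below is illustrative only: the function `bec_risk_flags`, the `URGENCY_TERMS` list, and the flag names are assumptions made for this example, and a well-written AI-generated email or a deepfake voice call could pass such checks untouched. It uses `email.utils.parseaddr` from the Python standard library to split the display name from the address.

```python
from email.utils import parseaddr

# Hypothetical keyword list; a real filter would need a far richer model.
URGENCY_TERMS = ("urgent", "immediately", "asap", "today")


def bec_risk_flags(from_header, subject, body, executives, company_domain):
    """Return a list of simple risk flags for one email.

    Flags an executive display name sent from an outside domain, and
    urgency language in the subject or body. Heuristic only.
    """
    name, addr = parseaddr(from_header)
    domain = addr.rpartition("@")[2].lower()

    flags = []
    known_names = {e.lower() for e in executives}
    if name.strip().lower() in known_names and domain != company_domain.lower():
        flags.append("executive_name_external_domain")

    text = f"{subject} {body}".lower()
    if any(term in text for term in URGENCY_TERMS):
        flags.append("urgency_language")
    return flags


# Hypothetical example: look-alike domain plus an urgent payment request.
suspicious = bec_risk_flags(
    "Jane Doe <jane.doe@examp1e.com>",
    "Urgent wire transfer",
    "Please pay the attached invoice.",
    executives=["Jane Doe"],
    company_domain="example.com",
)
benign = bec_risk_flags(
    "Jane Doe <jane.doe@example.com>",
    "Lunch",
    "See you at noon.",
    executives=["Jane Doe"],
    company_domain="example.com",
)
```

Flags like these are best treated as inputs to a verification step, such as confirming a payment request through a separately known phone number, and not as a verdict on their own.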

Key Insight

AI doesn’t just increase the volume of phishing attacks; it also greatly increases their success rate by making them more believable and targeted.

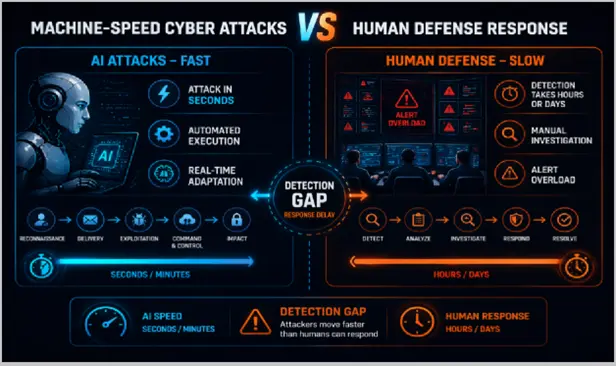

Machine-Speed Cyber Attacks vs Human Defence Gap

One of the biggest challenges in modern cybersecurity is the gap between machine-speed attacks and human response time. AI-powered cyber attacks operate at machine speed. They can scan systems, build attacks and launch them in minutes. In some situations, the entire attack lifecycle, from initial access to data theft, can be completed before security teams even detect unusual activity. But most Security Operations Centers (SOCs) still rely on human analysts. These teams have to triage alerts, investigate threats and decide how to respond, all of which takes time.

The Core Problem

This creates a critical mismatch:

- Attack speed: Seconds to minutes

- Detection time: Hours to days

- Response time: Often delayed further

SOC Overload and Alert Fatigue

Security Operations Centers (SOCs) receive thousands of alerts each day, the majority of which are false alarms, which makes it hard to detect actual threats promptly. This leads to alert fatigue, slower response times and missed threats. Cyber criminals exploit this by generating distractions that mask malicious activity and make it harder for security analysts to respond.
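One common way to reduce alert volume is to collapse duplicates and rank what remains before an analyst sees it. The sketch below shows the idea under stated assumptions: the alert fields (`rule`, `host`, `severity`, `asset_criticality`) and the scoring formula (severity multiplied by asset criticality) are hypothetical choices for illustration, not a standard.

```python
from collections import Counter


def triage(alerts, top_n=3):
    """Collapse duplicate alerts and return the `top_n` highest-scoring groups.

    Alerts with the same (rule, host) pair are treated as one group.
    The score is severity * asset_criticality, an illustrative formula.
    """
    counts = Counter((a["rule"], a["host"]) for a in alerts)

    best = {}
    for a in alerts:
        key = (a["rule"], a["host"])
        score = a["severity"] * a["asset_criticality"]
        # Keep the highest score seen for each group.
        if key not in best or score > best[key]["score"]:
            best[key] = {
                "rule": a["rule"],
                "host": a["host"],
                "score": score,
                "count": counts[key],
            }
    return sorted(best.values(), key=lambda g: g["score"], reverse=True)[:top_n]


# Hypothetical alerts: two duplicates, one low-value scan, one critical event.
alerts = [
    {"rule": "failed_login", "host": "web-1", "severity": 2, "asset_criticality": 1},
    {"rule": "failed_login", "host": "web-1", "severity": 2, "asset_criticality": 1},
    {"rule": "new_admin_account", "host": "dc-1", "severity": 4, "asset_criticality": 5},
    {"rule": "port_scan", "host": "web-1", "severity": 1, "asset_criticality": 1},
]
queue = triage(alerts)
```

Here the new admin account on the critical host rises to the top of the queue, and the two identical login failures appear once with a count of 2. Ranking does not remove the risk of distraction alerts, but it keeps noisy low-value events from burying the rare high-value one.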

Why Speed Matters

When detection is delayed:

- Attackers gain deeper access

- Sensitive data can be stolen

- Systems can be compromised fully

By the time a response begins, the damage may already be done.

Key Insight

The real advantage of AI in cyber attacks is not intelligence, but speed. Faster attacks shrink the time defenders have to respond, so conventional security measures that depend on human reaction are no longer enough.

AI Malware Evolution: Adaptive & Self-Learning Threats

In 2026, AI is transforming malware into something that can learn, change and avoid detection in real time. Traditional malware used static code and signatures, and was relatively easy to detect and prevent. AI-based malicious code, however, can modify itself to match its environment. Here are some key characteristics of AI malware:

Polymorphic Behavior

Malware that uses AI can change its code each time it is deployed, so every copy looks different. This makes it harder for signature-based tools to detect and block it.
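The weakness of exact-match signatures is easy to see with a cryptographic hash: changing a single byte of the input produces a completely different digest. The sketch below uses harmless placeholder byte strings, not real samples. The names `signature`, `known_bad` and `matches_signature` are hypothetical, and real signature engines use richer patterns than a single whole-file hash.

```python
import hashlib


def signature(payload: bytes) -> str:
    """Return the SHA-256 hex digest used here as a stand-in 'signature'."""
    return hashlib.sha256(payload).hexdigest()


# Hypothetical blocklist containing the digest of one known sample.
known_bad = {signature(b"example-sample-v1")}


def matches_signature(payload: bytes) -> bool:
    """True only if the payload is byte-for-byte identical to a known sample."""
    return signature(payload) in known_bad


original_detected = matches_signature(b"example-sample-v1")
variant_detected = matches_signature(b"example-sample-v2")  # one byte differs
```

The original placeholder matches the blocklist, while the one-byte variant does not. This is why code that changes on every deployment defeats detection that relies only on exact signatures.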

Self-Modifying Code

It can modify part of its own code during execution to avoid detection or bypass other security measures. This enables the malware to adapt to the system it is targeting.

Behaviour-Based Adaptation

AI malware observes how systems and security controls respond to various actions, and then changes itself accordingly. For example, it could slow down or modify its behaviour to evade detection.

Evasion Techniques

It can mimic legitimate processes, or slow down and reduce suspicious activity, to avoid triggering alerts. In extreme cases, it can blend in as part of the system’s normal operations, making it difficult for security professionals to identify.

Why This Is Dangerous

AI-based malware doesn’t just attack; it also learns from its surroundings on its own. This allows it to:

- Avoid detection systems

- Stay active for longer periods

- Increase the chances of successful attacks

Key Insight

AI-powered malware forces defenders to move their focus from “detecting known threats” to spotting unusual behaviour, because traditional signature-based measures become less effective.
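Spotting unusual behaviour usually means comparing current activity with a baseline. A minimal sketch of that idea is a z-score test: flag a value that sits more than a chosen number of standard deviations from the baseline mean. The function name `is_anomalous`, the threshold of 3.0, and the sample numbers are assumptions for illustration; production systems use far richer behavioural models.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, threshold=3.0):
    """Return True if `observed` deviates from `baseline` by more than
    `threshold` sample standard deviations.

    `baseline` needs at least two values. The threshold is illustrative.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all counts as unusual.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical baseline: outbound connections per hour from one host.
baseline = [10, 12, 11, 9, 10, 11, 12, 10]
normal_hour = is_anomalous(baseline, 11)
spike_hour = is_anomalous(baseline, 60)
```

With this baseline, 11 connections is treated as normal and 60 is flagged. Malware that deliberately slows its activity, as described above, can stay under a fixed threshold like this one, which is why a single statistic is only a starting point.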

Swarm AI & Multi-Agent Cyber Attack Systems

Swarm AI attacks use multiple AI systems to launch cyber attacks. Instead of a single attacker, the systems operate as a network, with each AI system playing a role. One could scan for potential victims, another could write a phishing email, and others could monitor the outcome and react.

How Swarm Attacks Work

- Multiple AI agents run simultaneously

- Each performs a specialized role

- Information is shared across the system

- The attack adapts collectively

Why This Is Dangerous

Swarm AI makes cyber attacks especially dangerous because they are:

- Faster: Multiple tasks happen at the same time

- More scalable: Can target many systems at once

- Harder to stop: Even if one part is blocked, others continue

Key Insight

Swarm AI makes cyber attacks more resilient and difficult to counter by creating a system of cyber attacks rather than individual attacks.

Real-World Impact of AI Cyber Attacks

AI cyber attacks are already affecting organisations across industries. As attacks become faster and more advanced, their impact is becoming more severe.

Financial Losses

AI-based attacks such as phishing and fraud are resulting in substantial financial losses. Business Email Compromise (BEC) attacks enhanced by AI are leading to greater and more frequent losses.

Data Breaches

AI enables faster access to networks and more effective movement within them. It helps attackers steal information without being traced.

Critical Infrastructure Risks

Critical sectors such as health, finance, and energy are particularly at risk. Malicious AI-based attacks can disrupt essential services and pose significant risks.

Increasing Attack Success Rates

AI not only increases the volume of attacks, but also the success rate by making attacks more targeted, authentic and intelligent.

Key Insight

The real threat with AI cyber attacks is not just the volume, but the quality and the level of success of the attacks.

Conclusion

AI-powered cyber attacks are reshaping the threat landscape by taking automation, speed and intelligence to the next level. Advanced cyber attacks were once the domain of elite hackers, but AI is making them accessible to attackers with less technical expertise. This means businesses and consumers are facing a dynamic and evolving threat environment that demands more sophisticated security measures.

Cybersecurity must shift from static to dynamic strategies. That includes using AI-based security, user awareness and constant monitoring. The future of cybersecurity will depend on the ability to keep up with the pace and sophistication of AI-driven threats through constant innovation and vigilance.

Frequently Asked Questions

Yes, they are more vulnerable with less security. AI makes them easy to detect.

They enable scams like identity theft and fake messages. These attacks feel more real and convincing.

They have limited effectiveness. AI-based threats require behaviour-based security tools.

A major role. AI exploits human mistakes through realistic and targeted tricks.

They are harder to trace due to automation and anonymity. Advanced tools are improving tracking.
